There are scientists and there are synthesists.

This kind of thing is what interests me right now, although not in the context of Inform. I wouldn’t want to take on the task of modeling conversation with this sort of parser, but it’s not incredibly daunting to work out a way for a user to interact with a Zork-style environment. Ultimately, the point of the parser is to take text from the user and turn it into a function that acts on the world state, and there aren’t that many of those functions for a given game. It’s certainly not easy, but it’s not impossible. On the other hand, the default I6 library of verbs is pretty straightforward in syntax and scope (at least, if you’re not getting into topics and other loosely constrained input), and we’re usually just looking at imperative sentences that have no adverbs, only a few heavily constrained prepositional phrases, no subordinate clauses, a few heavily constrained pronouns, refer only to things in scope, etc. The general problem of parsing natural language is certainly very hard; I’ve given up on finding any consistency in what the I7 compiler expects, for example, and that’s geared towards a very specific task.
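To make the "text in, function on the world state out" idea concrete, here is a minimal sketch in Python. It is not how Inform does it; the world layout (`room`, `inventory`), the verb table, and all function names are made up for illustration, and it only handles two-word commands like "take lamp".

```python
from typing import Callable, Dict, List, Optional

# Hypothetical world state: object names in the room and in the inventory.
World = Dict[str, List[str]]
# An action is a function that mutates the world and returns a message.
Action = Callable[[World], str]


def make_take(noun: str) -> Action:
    def take(world: World) -> str:
        if noun not in world["room"]:
            return f"You can't see any {noun} here."
        world["room"].remove(noun)
        world["inventory"].append(noun)
        return "Taken."
    return take


def make_drop(noun: str) -> Action:
    def drop(world: World) -> str:
        if noun not in world["inventory"]:
            return f"You aren't holding the {noun}."
        world["inventory"].remove(noun)
        world["room"].append(noun)
        return "Dropped."
    return drop


# Illustrative verb table; a real game would have a few dozen entries.
VERBS: Dict[str, Callable[[str], Action]] = {
    "take": make_take,
    "get": make_take,
    "drop": make_drop,
}

ARTICLES = {"the", "a", "an"}


def parse(command: str) -> Optional[Action]:
    """Turn 'verb noun' into an action, or None if it is not understood."""
    words = [w for w in command.lower().split() if w not in ARTICLES]
    if len(words) != 2 or words[0] not in VERBS:
        return None
    return VERBS[words[0]](words[1])
```

Usage would look like `action = parse("take the lamp")` followed by `print(action(world))` if `action` is not `None`. The parser itself never touches the world; it only picks which function to hand back, which is what keeps the number of cases manageable.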

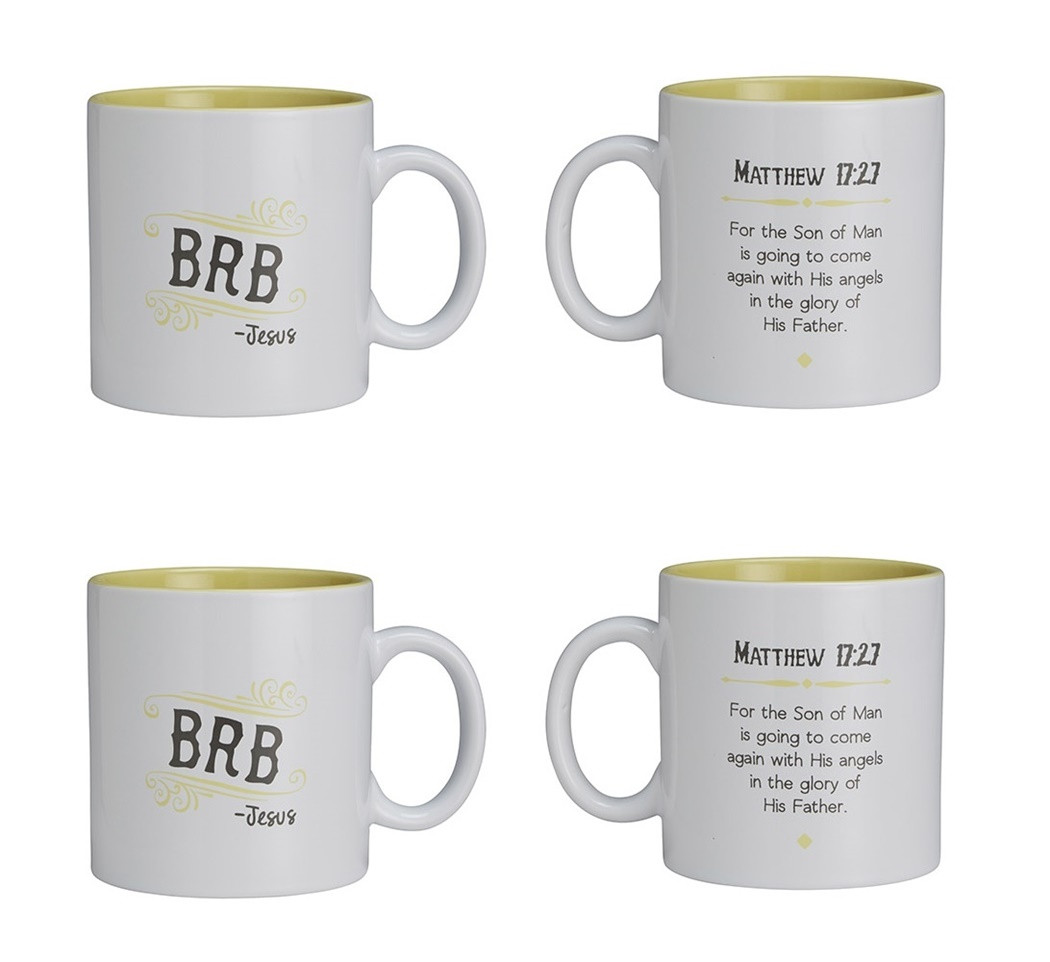


The I6 parser code is, to be frank, almost illegible in places, with a complicated series of goto statements, no real indication of what state exactly it’s keeping between the different functions, and functions that aren’t normally encountered in developing an I6 game and don’t have much documentation in the Inform Design Manual. As for resolving the semantic content of commands, that’s actually not that bad with such a restricted grammar and world model. Say something new, from the ground up? Bodily replacing the I6 parser is feasible but nontrivial. Not knowing the internals of I6, how easy would it be to completely replace the existing parser with a new one? The scope of the problem is broad even when restricted to an IF setting, and it’s tricky to ensure predictability in the system output. I also like the idea in principle of using machine learning to solve the general parsing problem, but it’s very difficult to pull off in practice. I’m not sure how representative those blogs are, though, so: Is this an active problem in IF that people care about, or is it just something that we’re resigned to? Is there any interest, though, in revising the parser for Inform? I get the impression from reading various IF blogs that the consensus is that this is an inherent problem with parser-based text-adventures, and the options are just to accept it as an inherent limitation or to switch to a choice-based approach. My last game was in Inform 7, which makes it much harder to get at lower-level features, and so I put those changes back on the drawing board. I’m not referring to simply adding new verbs or tokens here, but slightly more low-level stuff like having the parser try all lines of grammar for a given verb before returning an error.
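The "try all lines of grammar for a given verb before returning an error" idea can be sketched roughly like this. This is Python rather than I6, and it is not the Inform library's actual algorithm: the grammar table, the action names, and the single-word-noun simplification are all assumptions made for illustration. Each line is tried in turn, and only if none match does the parser report a failure, using the line that got furthest as the basis for the error.

```python
from typing import Dict, List, Optional, Set, Tuple

# Hypothetical grammar table: each verb has several lines, each a token
# pattern plus an action name. "noun" matches one word naming something
# in scope; any other token must match the input word literally.
GRAMMAR: Dict[str, List[Tuple[List[str], str]]] = {
    "put": [
        (["noun", "in", "noun"], "Insert"),
        (["noun", "on", "noun"], "PutOn"),
        (["on", "noun"], "Wear"),
    ],
}


def match_line(pattern: List[str], words: List[str],
               scope: Set[str]) -> Tuple[Optional[List[str]], int]:
    """Return (nouns, progress); nouns is None if the line fails.

    progress is how many words matched before the failure.
    """
    nouns: List[str] = []
    for i, token in enumerate(pattern):
        if i >= len(words):
            return None, i          # input ran out too early
        if token == "noun":
            if words[i] not in scope:
                return None, i      # not something in scope
            nouns.append(words[i])
        elif words[i] != token:
            return None, i          # literal word mismatch
    if len(words) > len(pattern):
        return None, len(pattern)   # leftover words after the pattern
    return nouns, len(pattern)


def parse(command: str, scope: Set[str]) -> Tuple[Optional[str], List[str], int]:
    """Try every grammar line for the verb; fail only after all are tried.

    Returns (action, nouns, progress). On failure, action is None and
    progress is the furthest any line got, which can drive the error text.
    """
    words = command.lower().split()
    if not words or words[0] not in GRAMMAR:
        return None, [], 0
    best = 0
    for pattern, action in GRAMMAR[words[0]]:
        nouns, progress = match_line(pattern, words[1:], scope)
        if nouns is not None:
            return action, nouns, progress
        best = max(best, progress)
    return None, [], best
```

With `scope = {"coin", "box", "table", "hat"}`, "put on hat" fails the first two lines at the first word but still reaches the third line and resolves to the hypothetical `Wear` action, rather than stopping with an error from the first line.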


In this tutorial, I’m going to show you how to turn on Enhanced Dictation in OS X and take advantage of speech-to-text, even when you're off the grid. That is: continuous, streaming dictation with live feedback is made possible. Because voice recognition processing runs locally on your Mac, text appears instantly as you speak. In other words, Enhanced Dictation lets you dictate without an active Internet connection. On the Mac, computing resources like CPU power, battery life and RAM are not as critical as on mobile. Therefore, OS X Mavericks provides a new Enhanced Dictation feature which converts your words to text without utilizing Apple’s servers. iOS devices have limited computing power, so the Dictation feature on the iPhone, iPod touch and iPad requires network connectivity in iOS 7 (iOS 8 supports streaming voice recognition and 22 new languages).

A while back, I added some hacks to the standard Inform 6 parser to fix various issues with it (or things I considered to be issues, at least).

This is much like iOS’s Dictation feature as both iOS and OS X use the same Nuance-powered technology that turns speech to text. You can use “speakable items”, basically a set of spoken commands, to open apps, choose menu items, email contacts and convert whole spoken sentences to text, wherever you can type text.


OS X includes a nifty Dictation feature which allows you to control your Mac and apps with your voice.